What is Viewbotting? How Bots are Defrauding Advertisers on Streaming Platforms

Jeffrey Edwards

Marketing

September 07, 2022

Over the past decade, live streaming has gone from a niche platform for gamers to a cultural phenomenon, and streamers have gone from microcelebrities to household names with follower counts surpassing those of the biggest names in Hollywood.

Twitch, the predominant live streaming platform, grew from approximately 500,000 concurrent viewers in 2016 to over 2.76 million concurrent viewers in 2021, a year in which it amassed over 24 billion hours watched.

Those extraordinary viewership numbers have enticed other media giants to enter the market: YouTube, Facebook, and Instagram all now offer live-streaming capability.

In this online economy, views are money, and where money goes, fraud often follows.

In the shadow of the billion-dollar streaming industry is a booming marketplace for fake views generated by view bots and viewbotting services. These bots are cheap and easy to use, and for unscrupulous streamers, the reward often outweighs the risk.

What is viewbotting?

Viewbotting is a form of invalid traffic (IVT) in which pieces of automated software (bots) are used to view streaming videos or live streams in order to artificially boost the view count.

Most view bots are simple scripts that open a video in a headless browser. More complicated viewbotting services may also create fake accounts to mimic logged-in viewers and include a chatbot that spams the stream’s chat or comments section with artificial banter to make audience numbers appear more legitimate.

Why do creators use view bots?

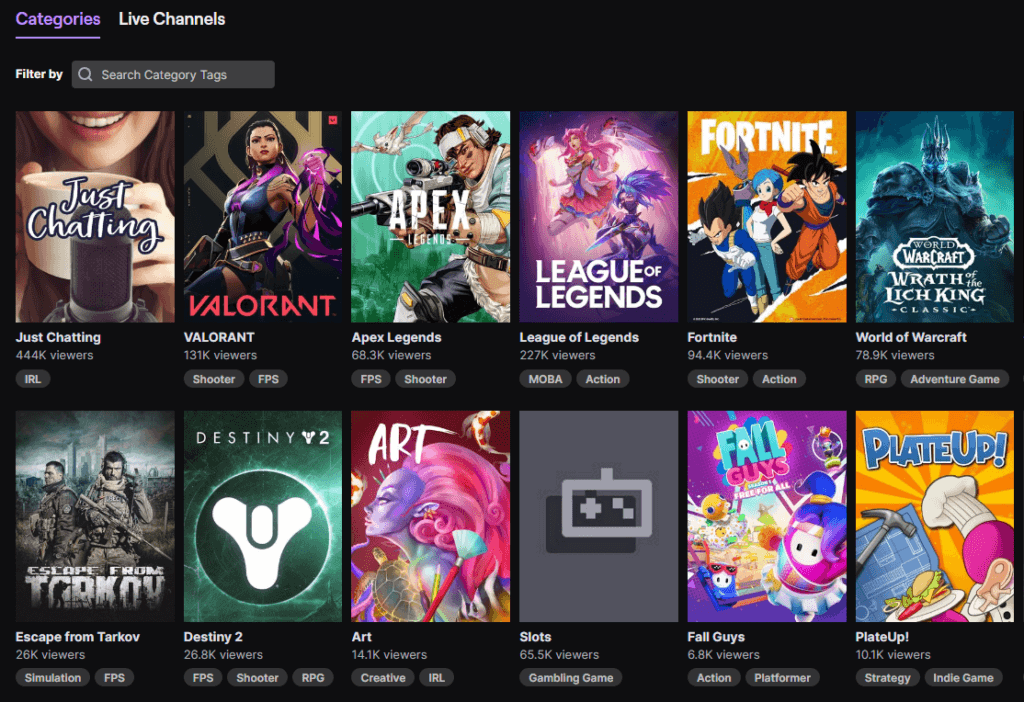

Alongside subscribers, views are one of the top metrics for success in the social media economy. Views help determine whether a video is monetized on YouTube and where it ranks in search results. They also act as a form of social proof: users are much more likely to click on a video that has lots of views.

Basically, the more views a YouTuber or Twitch streamer gets, the higher their earning potential.

To make matters worse, most streaming sites operate on a kingmaker system, promoting the streams with the most views and engagement to the top of their directories and search results. For new creators, this system can make it particularly difficult to break out and find viewership: they end up stuck streaming to small audiences, with little chance of being promoted by the algorithm.

With all of these factors in mind, it’s easy to imagine how tempting it can be for creators to use view bots to boost their viewership numbers and prompt streaming platforms to promote their content.

It also doesn’t help that view bots are relatively easy to set up and use, and that users can easily maintain a layer of plausible deniability (more on that later).

How does viewbotting work?

Most viewbotting is carried out either by viewbotting scripts that a user sets up themselves, or by paid viewbotting services that offer thousands of views for low prices. Let’s break it down.

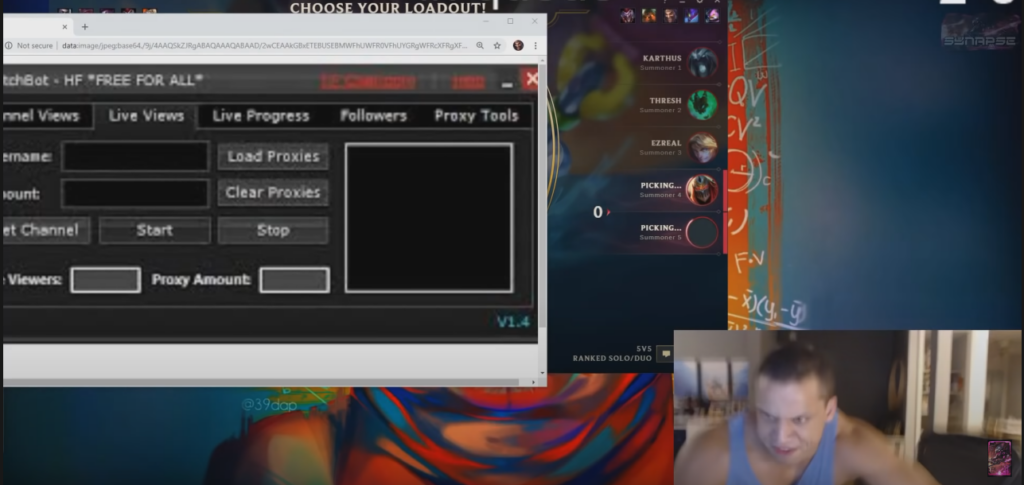

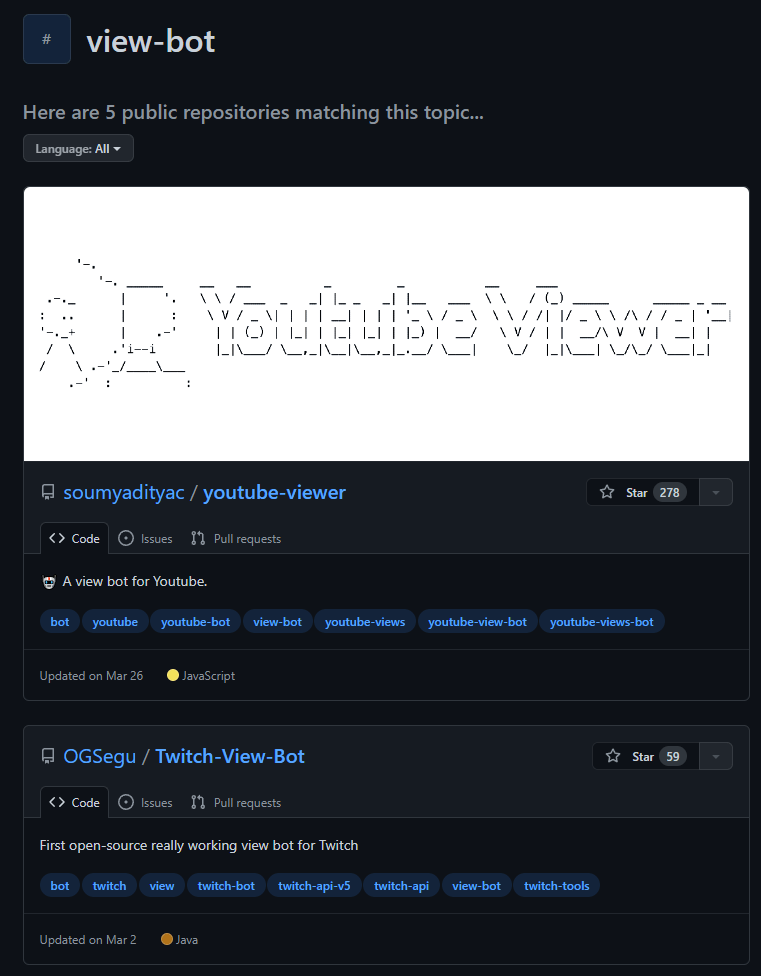

Viewbotting scripts

Technically savvy streamers can easily write a script to run a headless browser to open a stream or video for a certain duration, thus creating a view. This can be scaled up by hosting hundreds or even thousands of instances on a cloud service such as AWS.

Viewbotting services

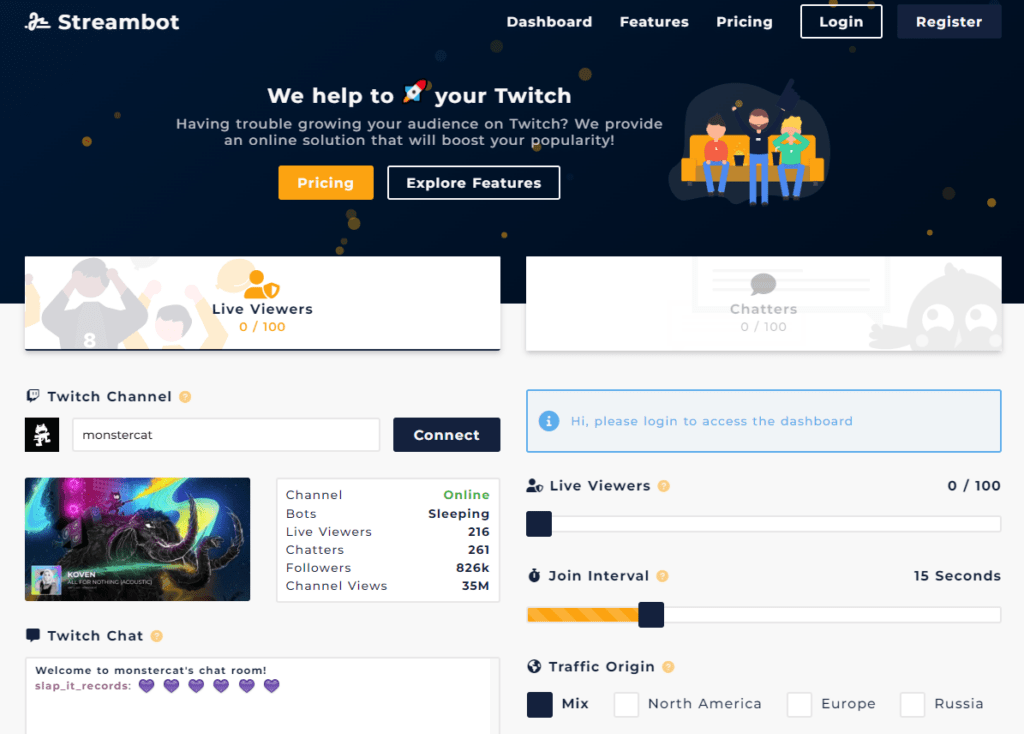

For streamers unable or unwilling to create their own view bot scripts, there are dozens of viewbotting services that offer thousands of views for low prices, often with free trials available to entice curious streamers.

These services will send thousands of bots to view your stream for a monthly cost as low as $10. They offer a higher level of control than rudimentary scripts, such as the capability to add or remove viewers instantly, set viewer join and leave intervals, and choose the region from which “viewers” originate. Using view bot services is against the terms of service of major streaming platforms like Twitch and YouTube.

Malicious viewbotting

While it is not a way for streamers to inflate their own numbers, malicious viewbotting is also worth mentioning.

Malicious viewbotting is when people send bots to a stream other than their own, in an attempt to get the streamer banned from Twitch, or simply lower their credibility. This technique is so common that Twitch provides a FAQ for streamers to deal with it.

Malicious viewbotting is unlikely to result in a ban for the targeted streamer, but Twitch recommends reporting instances right away.

Perhaps the largest impact of malicious viewbotting is that it creates an attribution problem for Twitch, which may have difficulty discerning whether a user is viewbotting their own channel, and deserving of a ban, or is the victim of malicious viewbotting.

How to spot view bot use

Spotting unsophisticated viewbotters is not particularly difficult if you know what to look for. Typically, a simple view bot is just that: a bot that views videos. As such, it won’t increase any other metrics, such as engagement, chat activity, or subscriber numbers. If a streamer or content creator routinely gets high viewership numbers with relatively low engagement and subscribership, it’s a fair sign that foul play could be involved. A formulaic, generic chat with repeated comments like “awesome stream” and “love this” is also a dead giveaway.
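These warning signs can be turned into a rough screening check. The Python sketch below is only an illustration: the `StreamSample` fields, the function names, and every threshold are assumptions made up for this example, not values published by Twitch, YouTube, or CHEQ. It flags a stream when chat activity or subscriber growth is low relative to the viewer count, or when the chat is dominated by repeated messages.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class StreamSample:
    """Hypothetical snapshot of one stream; the field names are illustrative."""
    avg_viewers: int
    chat_messages: list[str]
    new_subscribers: int


def repeated_chat_share(messages: list[str]) -> float:
    """Fraction of messages whose normalized text appears more than once."""
    if not messages:
        return 0.0
    counts = Counter(m.strip().lower() for m in messages)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(messages)


def viewbot_warning_signs(
    sample: StreamSample,
    min_chat_per_viewer: float = 0.05,          # assumed threshold
    min_subscribers_per_viewer: float = 0.001,  # assumed threshold
    max_repeated_share: float = 0.5,            # assumed threshold
) -> list[str]:
    """Return the heuristic warning signs that apply to this sample."""
    signs = []
    if sample.avg_viewers > 0:
        if len(sample.chat_messages) / sample.avg_viewers < min_chat_per_viewer:
            signs.append("low chat activity for viewer count")
        if sample.new_subscribers / sample.avg_viewers < min_subscribers_per_viewer:
            signs.append("low subscriber growth for viewer count")
    if repeated_chat_share(sample.chat_messages) > max_repeated_share:
        signs.append("chat dominated by repeated messages")
    return signs


suspicious = StreamSample(
    avg_viewers=5000,
    chat_messages=["awesome stream", "love this", "Awesome stream ", "love this", "hi"],
    new_subscribers=2,
)
print(viewbot_warning_signs(suspicious))
```

A check like this can only surface candidates for a closer look. It cannot tell a streamer who is viewbotting their own channel from one who is the target of malicious viewbotting, and more sophisticated services that fake chat and accounts may slip past it.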

How viewbotting hurts go-to-market efforts

Not only is viewbotting a dishonest way to get ahead on streaming platforms and unfair to other streamers who worked hard to build an audience, it also degrades the value of the platform for advertisers.

Like other fake engagement techniques, such as click fraud and lead-gen fraud, viewbotting is a form of ad fraud. Essentially, viewbotting steals from advertisers who paid for placement that was ostensibly supposed to reach real humans, not bots.

Under the Twitch Partner Program, high-profile streamers can earn revenue from ads displayed on their channel in the form of a commission for every ad impression. If those impressions are generated by bots, not real people, then the ad budget used to create and place those ads has essentially been wasted.

If it costs $2,000 for 100,000 impressions on YouTube, and 15-20% of those impressions are fake, that’s $300-400 wasted. Considering most YouTube advertising campaigns measure impressions in the millions, the costs of those fake impressions can add up fast. Budget is also used faster when it’s wasted on fake impressions, causing brands to potentially miss out on opportunities with genuine customers.
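The arithmetic is simple enough to check by hand: wasted spend is the campaign cost multiplied by the share of fake impressions. The short Python sketch below works through the $2,000 example and then scales it to one million impressions. The function name is made up for this illustration, and the larger campaign assumes the same price per impression, which real campaigns may not have.

```python
def wasted_spend(total_cost: float, fake_share: float) -> float:
    """Ad spend lost to fake impressions, given the fake share (0.0 to 1.0)."""
    if not 0.0 <= fake_share <= 1.0:
        raise ValueError("fake_share must be between 0 and 1")
    return total_cost * fake_share


# Example from the text: $2,000 buys 100,000 impressions, 15-20% are fake.
cost = 2000.0
low = wasted_spend(cost, 0.15)
high = wasted_spend(cost, 0.20)
print(f"Wasted on 100,000 impressions: ${low:,.0f} to ${high:,.0f}")

# Assumption: a 1,000,000-impression campaign at the same rate costs 10x as much.
big_cost = cost * 10
big_low = wasted_spend(big_cost, 0.15)
big_high = wasted_spend(big_cost, 0.20)
print(f"Wasted on 1,000,000 impressions: ${big_low:,.0f} to ${big_high:,.0f}")
```

At the rates in the example, the loss works out to $300 to $400 per 100,000 impressions, so a campaign measured in millions of impressions loses thousands of dollars before any remarketing costs are counted.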

And if advertisers aren’t vigilant in weeding out bot impressions, it’s possible to waste even more budget on remarketing efforts, sending good money after bad bots to retarget “viewers” that never existed in the first place.

But the waste doesn’t end there. False impressions also make their way into advertisers’ data, skewing their metrics and tainting the data used to make further advertising decisions.

Can you block viewbotting?

Unfortunately, since viewbotting takes place on streaming services, such as Twitch and YouTube, it’s up to those services to fight viewbotting and hold fraudsters accountable. Luckily, major streaming platforms are taking the problem very seriously, and have taken steps to ensure quality viewership and punish those who would cheat to get ahead.

Twitch actively monitors for viewbotting, and will indefinitely ban any user caught using view bots to boost their own streams. And though malicious viewbotting complicates this policy, the company has had some success: Twitch banned over 15 million bot accounts last year.

The live-streaming giant has also successfully sued multiple creators of view bots, putting pressure on companies operating in the quasi-legal bot services market.

And while you can’t block viewbotting on the native platform, you can block bot traffic from Google, Facebook, or YouTube campaigns using go-to-market security tools.

The high cost of ad fraud and fake traffic

This kind of invalid traffic is a far-reaching problem that has grown to affect every corner of the internet and shows no signs of slowing down.

In our recent research report, we found that, on average, 22.3% of unique site visits across all industries are bots. But the gaming industry blew that average out of the water with an astounding 66% invalid traffic rate on unique site visits.

Audience pollution at that level can have major impacts on marketing data, efficiency, and even the bottom line. If 66% of site visitors are bots, then two-thirds of your marketing data is contaminated, and it’s impossible to make good marketing decisions based on bad data.

And beyond wasting time, those fake users, views, and ad clicks have real financial consequences. Advertisers are estimated to lose $68 billion in wasted ad spend by the end of 2022, according to Juniper Research.

Protect your pipeline with go-to-market security

Considering that 41% of all web traffic is invalid traffic, when you advertise on any video-based platform, or any web-based platform for that matter, chances are good that your ads will be exposed to bots (view bots included, of course).

Knowing which impressions or clicks are real potential customers and which are bots, fraudsters, or bad actors is critical to the integrity of your campaigns, data, and go-to-market efforts writ large.

To fight viewbotting and the fake web, marketers need tools that provide end-to-end protection for their go-to-market efforts and customer journey. That’s where CHEQ can help.

CHEQ’s bot mitigation engine uses advanced fingerprinting and multi-layered security models to detect and block bot activity, so you can verify the authenticity of all traffic entering your funnels and pipelines, whether via paid marketing campaigns, organic search, affiliate programs, or any other method.

With CHEQ, marketers can be confident of the integrity of all data flowing through their advertising audiences, campaign analytics, CRM, DMP, CDP segments, and business intelligence systems, and rest assured that decisions are made on real, solid data.

Within two months of partnering with CHEQ, the UserWay team reduced their unqualified traffic by 56% and redirected their ad spend towards real businesses seeking solutions for digital accessibility.

To learn more and find out what’s real (and what’s not) in your funnel, get started with a demo of CHEQ today.