What Is Bot Traffic? Types, Detection & How to Manage It in the AI Era

Sanja Trajcheva

Cyber Risks & Threats | March 12, 2026

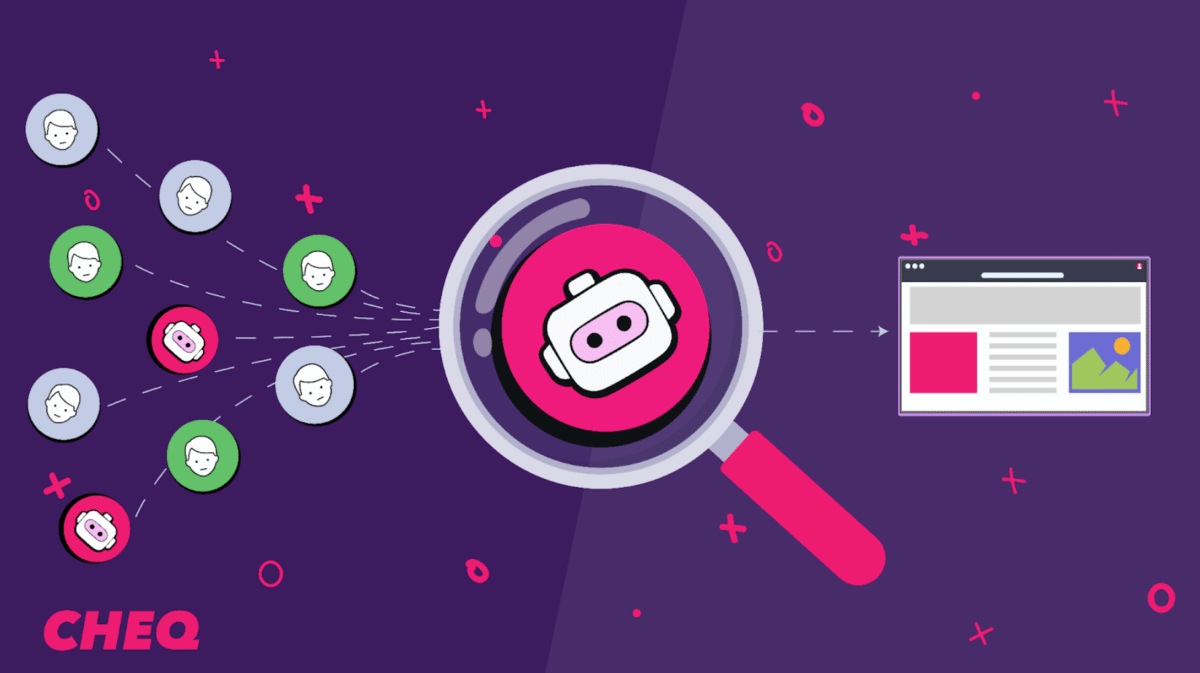

Bots have existed almost as long as the internet. But in the AI era, the automated software driving bot traffic has been fundamentally transformed — growing more sophisticated, harder to detect, and more consequential for the businesses that encounter it.

Bot traffic can sometimes make our lives easier. Whether on desktop or mobile, bots are widely deployed by online services to collect data from the internet and enhance our online experience. For example, they optimize our search results, benefiting businesses and internet users.

But there’s a much darker side to bots. Malicious bots are a serious threat, used for a wide range of damaging activities, including fraud, unauthorized data scraping, and compromising websites, accounts, and more. And as AI capabilities advance, the line between human and automated behavior grows harder to draw.

So how can you ensure your website reaps the benefits while minimizing the risks?

What Are Bots and Bot Traffic?

To put it simply, bot traffic is non-human traffic to a website, driven by automated software applications that run a wide range of tasks on the internet. Let’s look at some common examples.

- Crawlers: These bots rapidly scan websites, searching for content and site patterns. Not all of their effects are negative. Crawlers, for instance, help search engines identify and rank relevant pages. On the downside, bad actors can deploy crawlers to copy content or harm a rival’s search visibility.

- Headless browsers: These are browsers or browser simulations that run without a recognizable graphical interface: to put it in simple terms, they have no screen. This makes them significantly faster and more efficient at scraping, crawling, and more, a major advantage for bots – both good and bad.

- Scrapers: These bots behave like crawlers, but they aren’t just scanning for content: they’re searching for specific pieces of data.

- Spambots: These bots send unwanted and unsolicited digital communications, like comments, reviews, and social media posts. The aim is to promote products or services, gather personal data, or even shift public opinion.

- DDoS bots: A distributed denial-of-service (DDoS) attack is a particularly nasty form of cyberattack that floods the targeted website with a large volume of fake traffic, overwhelming its infrastructure. The attacks are built on botnets: large networks of connected devices that have been infected with malware and are controlled remotely by the attacker.

Whether a specific bot is acceptable on your website depends largely on its intent and how well it respects your site’s policies, such as “robots.txt,” which websites use to define the permissions of bots (both individually and in general) that visit their sites. Responsible bots follow your robots.txt directives, stay within rate limits, and comply with authentication requirements where applicable.
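
To make the robots.txt idea concrete, here is a minimal Python sketch of how a well-behaved bot might check its permissions before fetching a page. It uses the standard library's `urllib.robotparser`. The robots.txt content, the bot name `ExampleSearchBot`, and the URLs are hypothetical, chosen only for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: ExampleSearchBot
Allow: /

User-agent: *
Disallow: /account/
Disallow: /checkout/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(ROBOTS_TXT)

# The named crawler gets its own, more permissive rule group.
print(is_allowed(parser, "ExampleSearchBot", "https://example.com/account/settings"))
# Any other bot falls back to the "*" group.
print(is_allowed(parser, "OtherBot", "https://example.com/account/settings"))
```

Keep in mind that this check runs on the bot's side. A bot that never performs it is not stopped by the file itself.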

To understand how bots operate across different environments, it’s helpful to look at the major interface types they use:

- Browsers and headless browsers

- Scripted HTTP request tools

- API-based automation tools*

- Crawler frameworks

- AI agents and model-driven crawlers

*Note: While API-based automation exists across the industry, CHEQ’s current offering does not protect APIs. This reference is included solely to explain the landscape, not CHEQ’s capabilities.

What Are the Different Types of Bot Traffic?

Bot traffic falls into several distinct families, each with different intent, policy status, and business impact.

| Bot Family | Typical Intent | Policy Status | Primary Impact |
|---|---|---|---|
| Search or partner crawlers | Discovery and indexing | Allowed if compliant | Visibility |
| Automation tools | Perform automated tasks at scale | Allowed for known tools; forbidden for unknown | Performance degradation and skewed analytics |
| Scrapers | Scraping of prices, data, or content | Forbidden | IP theft and revenue loss |
| Malicious bots | Accessing user accounts | Forbidden | Financial loss, reputational damage |
| Scalper bots | Automatically acquiring products to resell at a profit | Forbidden | Market distortion |
| Spambots | Automated programs to send spam | Forbidden | Undesired marketing communications, spread of malware and phishing |
| Grinch bots | Used in inventory hoarding | Forbidden | Reduced availability of products |

Here’s a closer look at the specific types of bot traffic and their real-world impact.

Credential Stuffing and Account Takeover

Bad actors use bots to test stolen account credentials — usernames, email addresses, and passwords mined from data breaches and sold on the dark web — against websites and services at scale. When a match is found, they gain unauthorized access to user accounts.

Carding and Coupon Abuse

Bots play a crucial role in financial crime. They can be used to automate and vastly increase criminals’ capabilities in carding, where bad actors cycle through lists of credit card numbers to find which remain active. Bots are also deployed in coupon abuse — harvesting discounts intended for new users by creating fake accounts at massive scale.

Inventory Hoarding and Scalping

Bots can add large quantities of a product to online shopping carts, reducing availability and leaving real customers unable to purchase. Scalper bots take this further by automatically acquiring scarce products to resell at a profit — concert tickets and limited-edition sneakers are common targets.

Price and Content Scraping

Using scrapers, bad actors extract specific pieces of data for competitive advantage — competitor pricing, product details, or proprietary content — without authorization.

Click Farming and Ad Fraud

Bots simulate engagement to inflate ad metrics and drive up cost-per-click (CPC) spend. Automated clicks supplement click farms, artificially inflating traffic to fraudulent sites or amplifying fake news at a scale no human operation could match.

AI Crawlers

AI crawlers scan websites at speed, with mixed effects. They can support search engine results on the positive side, but bad actors deploy them to copy content or extract proprietary data. CHEQ’s internal research shows AI crawlers favor mid-depth content, with approximately 40% of hits occurring at depth 3 and about 26% at depth 4.

Spam and Form-Filling Bots

These bots flood sign-up forms with fake data and bogus leads, damaging CRM integrity, reducing sales efficiency, and eroding trust across teams and with partners.
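
As a rough illustration, bogus submissions can sometimes be screened before they reach your CRM by checking how quickly a form was completed and how often an identical payload repeats. The Python sketch below assumes a hypothetical record shape (`email`, `message`, `fill_seconds`) and hypothetical thresholds. It is a starting point to adapt, not a complete defense.

```python
from collections import Counter


def _payload_key(submission):
    """Normalize the fields used to spot repeated identical entries."""
    return (submission["email"].strip().lower(), submission["message"].strip())


def flag_submissions(submissions, min_fill_seconds=2.0, max_identical=2):
    """Return the indexes of submissions that look automated.

    A submission is flagged when the form was completed faster than
    min_fill_seconds, or when the same payload appears more than
    max_identical times. Both thresholds are assumptions to tune.
    """
    counts = Counter(_payload_key(s) for s in submissions)
    flagged = []
    for index, submission in enumerate(submissions):
        too_fast = submission["fill_seconds"] < min_fill_seconds
        repeated = counts[_payload_key(submission)] > max_identical
        if too_fast or repeated:
            flagged.append(index)
    return flagged
```

Flagged entries are candidates for review or a challenge rather than automatic deletion, since a fast or repeated submission can occasionally be legitimate.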

How Can You Detect Bot Traffic?

Bot traffic is an essential element of the internet today: you need to ensure you enjoy the benefits while minimizing any negative aspects.

Analytics tools can help detect potential problems if you have unexplained traffic spikes or see your conversion rates drop. Response codes and even device fingerprints can be captured in analytics with integrated detection. Additionally, CDN or WAF logs can be used to monitor server-side traffic.

An effective detection capability produces other benefits beyond monitoring search crawlers: for instance, it helps protect the integrity of analytics, while protecting users more widely.

In short, analytics tools help build a picture of who’s visiting your site, how they’re interacting, and whether the activity aligns with typical human behavior.

Here are some of the most common detection signals to monitor across both analytics and infrastructure tools:

| Signal | Description | Meaning | Tool / Log Source |
|---|---|---|---|
| Unusual or Missing User Agent Strings | Requests that lack a recognizable browser or device signature. | Indicates non-human automation or improperly configured bots. | Server logs, WAF logs |
| Repeated TLS or Device Fingerprints | Identical cryptographic handshakes or fingerprints from multiple sessions. | Suggests scripted traffic attempting to spoof different users. | CDN or WAF logs |
| Uniform Timing Between Requests | Requests occur at fixed intervals with no natural pauses in user activity. | Points to automation or botnets performing coordinated actions. | Server logs, behavioral analytics |
| High Volume of Failed Authentication Attempts | Repeated failed login or token verification requests. | May signal credential stuffing or brute-force attempts. | Server logs, SIEM tools |
| Session or Token Reuse Across Multiple IPs | Same session ID or token appears from varied geolocations or devices. | Indicates possible session hijacking or token reuse by bots. | WAF logs, application logs |
| Unusual Traffic Spikes by Region or Device Type | Sudden surges from specific countries, IP ranges, or outdated browsers. | Suggests targeted scraping, ad fraud, or test automation. | Analytics (GA4, Adobe Analytics) |
| Low Engagement Metrics | High pageviews with short durations or instant exits. | Reflects non-human traffic inflating engagement or ad metrics. | Analytics (GA4, Adobe Analytics) |
| Rapid Form Submissions or Repeated Identical Entries | Duplicate or sequential form fills in milliseconds. | Indicates bot-based lead generation or spam campaigns. | Web application logs, web form analytics |
| Unexpected 404/403 Patterns | Repeated attempts to access restricted or non-existent endpoints. | Suggests reconnaissance or scanning by malicious bots. | Server logs, WAF logs |
| Disproportionate Traffic to Specific Endpoints (e.g., /api/, /login/) | Bots target sensitive endpoints disproportionately. | Implies scraping, brute-force, or exploitation attempts. | Server logs, endpoint monitoring |

No single metric confirms bot activity on its own. Instead, look for patterns across multiple data sources—for example, a sudden spike in failed logins that also shows identical device fingerprints in your WAF logs.
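
One way to put this cross-checking into practice is to group requests by fingerprint and only raise a flag when at least two independent signals fire together. The Python sketch below is a simplified illustration. The `Request` record, the signal names, and every threshold are assumptions for the example and do not reflect any particular product's logic.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class Request:
    fingerprint: str  # e.g. a TLS or device fingerprint from WAF logs
    timestamp: float  # seconds
    user_agent: str
    path: str
    status: int


def uniform_timing(timestamps, min_requests=5, max_cv=0.1):
    """True when gaps between requests are suspiciously regular.

    Uses the coefficient of variation (std dev / mean) of the gaps.
    """
    if len(timestamps) < min_requests:
        return False
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average = mean(gaps)
    if average == 0:
        return True  # everything arrived at the same instant
    return pstdev(gaps) / average <= max_cv


def signals_for(requests, max_failed_logins=5):
    """Return the set of signal names that fire for one fingerprint."""
    fired = set()
    if any(not r.user_agent.strip() for r in requests):
        fired.add("missing_user_agent")
    if uniform_timing([r.timestamp for r in requests]):
        fired.add("uniform_timing")
    failed = sum(
        1 for r in requests
        if r.path.startswith("/login") and r.status in (401, 403)
    )
    if failed >= max_failed_logins:
        fired.add("failed_auth_burst")
    return fired


def suspicious_fingerprints(requests, min_signals=2):
    """Map each fingerprint to its signals when enough of them agree."""
    grouped = defaultdict(list)
    for request in requests:
        grouped[request.fingerprint].append(request)
    flagged = {}
    for fingerprint, group in grouped.items():
        fired = signals_for(group)
        if len(fired) >= min_signals:
            flagged[fingerprint] = fired
    return flagged
```

Requiring agreement between signals trades some sensitivity for fewer false alarms. A single odd metric alone never triggers a flag here.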

Because false positives are expected, it’s important to tune your thresholds regularly. Compare current patterns against known baselines and adjust as your legitimate traffic changes.
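
A simple way to tie a threshold to a baseline is to set it a few standard deviations above what legitimate traffic normally produces, then recompute it as the baseline shifts. The Python sketch below assumes you already have a list of counts for a metric (for example, failed logins per hour) taken from a period you trust. The multiplier `k` is an assumption to tune, not a recommended value.

```python
from statistics import mean, stdev


def baseline_threshold(baseline_counts, k=3.0):
    """Return mean + k * sample standard deviation of the baseline."""
    if len(baseline_counts) < 2:
        raise ValueError("need at least two baseline observations")
    return mean(baseline_counts) + k * stdev(baseline_counts)


def is_anomalous(current, baseline_counts, k=3.0):
    """True when the current value exceeds the baseline-derived threshold."""
    return current > baseline_threshold(baseline_counts, k)
```

Raising `k` reduces false positives at the cost of missing smaller anomalies, and lowering it does the reverse. Refreshing the baseline after seasonal peaks or campaign launches keeps the threshold aligned with your real traffic.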

How and When Should You Manage and Limit Bot Access?

In most cases, it doesn’t make sense to block bots entirely. Your business might want to enable search engine bots to do their work, ensuring your content gets its rightful place in search results. Some bots might pick up on news you want to promote or perhaps even a job opening you want to fill.

For example, AI agents can represent potential buyers; this means that overly restrictive blocking could be problematic, actually eliminating your next best customer.

The ideal scenario is to permit such bots to access your site while keeping malicious bots out to the greatest degree possible. Of course, you could simply block suspicious IP addresses, but this blunt tool isn’t a fix-all: it can be time-consuming to maintain and can easily block legitimate users.

Simple measures can go a long way. For instance:

- Publish policies like robots.txt and maintain allowlists: Robots.txt is a simple text file that tells bots visiting your site what they may and may not access. It is advisory: compliant bots honor it, but it does not technically stop a bot that chooses to ignore it, so pair it with enforcement elsewhere. Similarly, an ‘allowlist’ (also known as a whitelist) lists bots and other applications that are permitted to access your site.

- WAF or CDN controls: These can come in different forms. For instance, by applying rate limits to particular actions on your website – for example, login attempts or form submissions – you can block bots from overwhelming your server with excessive requests.

- Bot management techniques: There are numerous ways to detect bot behavior, such as tracking suspicious scrolling and browsing patterns. CHEQ’s behavioral analysis engine is designed to detect such anomalous activity at both the network and individual levels.
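
To illustrate the rate-limiting idea, here is a minimal sliding-window limiter in Python. In practice this control usually lives in your WAF or CDN configuration rather than in application code. The class name, the key (for example a client IP combined with an endpoint), and the limits are all assumptions for the sketch.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per key within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # key -> timestamps of allowed hits

    def allow(self, key, now):
        """Record and allow the request, or reject it if over the limit.

        `now` is passed in explicitly (e.g. from time.monotonic()) so the
        behavior is easy to test.
        """
        hits = self._hits[key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


# Example: at most 3 login attempts per minute for each client.
limiter = SlidingWindowLimiter(max_requests=3, window_seconds=60)
```

Rejected requests are not recorded, so a client that keeps hammering the endpoint is allowed again only once its earlier accepted requests age out of the window.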

Here’s a quick list to get started:

- Identify and permit access by known good crawlers through allowlisting

- Set basic rate limits on sensitive endpoints

- Challenge or block bots that show high-risk patterns
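
The three starting steps above can be sketched as a single decision function. This Python example is illustrative only: the allowlisted name `examplesearchbot`, the sensitive path prefixes, and the `risk_score` scale (0 to 1, from whatever detection you use) are hypothetical. It also assumes the crawler's identity has already been verified by some other means, since a user agent string alone can be spoofed.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    ALLOW = "allow"
    RATE_LIMIT = "rate_limit"
    CHALLENGE = "challenge"
    BLOCK = "block"


# Hypothetical values, for illustration only.
KNOWN_GOOD_CRAWLERS = frozenset({"examplesearchbot"})
SENSITIVE_PREFIXES = ("/login", "/signup", "/checkout")


def decide(verified_crawler: Optional[str], path: str, risk_score: float) -> Action:
    """Pick an action for one request.

    verified_crawler is the name of a crawler whose identity was already
    verified, or None for everything else.
    """
    if verified_crawler and verified_crawler.lower() in KNOWN_GOOD_CRAWLERS:
        return Action.ALLOW
    if risk_score >= 0.9:
        return Action.BLOCK
    if risk_score >= 0.6:
        return Action.CHALLENGE
    if path.startswith(SENSITIVE_PREFIXES):
        return Action.RATE_LIMIT
    return Action.ALLOW
```

Ordering matters in this sketch: allowlisted crawlers are never challenged, and unverified traffic to sensitive endpoints is rate limited even when its risk score is low.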

What Are the Latest AI-Driven Bot Trends, and How Do You Handle AI Bot Traffic?

AI has surged in use online—and bots are no exception.

AI traffic today comprises crawlers and agents that fetch and generate content. These tools can be highly beneficial, representing AI agents and machine customers that could potentially be buyers or users of your services. Such tools could support your customers in multiple ways, helping them through their research and evaluation processes.

On the other hand, we all deal with the potentially negative aspects of such agents: for example, bots that are used to produce spam, spread malicious content or steal your intellectual property.

How can you best handle this traffic, reaping the benefits while minimizing potential risks? Let’s look at a few key steps:

- Identify AI sources: Where possible, determine the source of the AI traffic. For instance, several AI crawlers and agents self-declare, including ChatGPT-User, ClaudeBot, and PerplexityBot.

- Decide policy: This will depend on the entity and purpose behind each type of AI-driven traffic. For example, you may allow OpenAI’s ChatGPT-User agent, which fetches information in real time when a user prompts ChatGPT, while prohibiting OpenAI’s GPTBot from collecting training data across your entire site without guardrails.

- Control access: Use allowlists for approved AI crawlers and apply robots.txt or llms.txt directives where they are respected. Use behavioral scoring to filter unverified or high-risk traffic.

- Monitor and tune: It’s important to ensure your approach to AI bots is fit for purpose. Periodically review logs and analyze any false positives, while adjusting the thresholds used to determine bad behavior when necessary.
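
As a rough sketch of the identify, decide, and control steps, the Python example below spots self-declared AI agents in a user agent string and renders a per-agent robots.txt from a policy table. The policy shown (allow ChatGPT-User, disallow GPTBot) simply mirrors the example above and is not a recommendation. The function names are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Self-declared AI agent tokens mentioned in this article.
KNOWN_AI_TOKENS = ("ChatGPT-User", "GPTBot", "ClaudeBot", "PerplexityBot")

# Illustrative policy only: what to allow is a business decision.
AI_POLICY = {
    "ChatGPT-User": "allow",
    "GPTBot": "disallow",
}


def declared_ai_agent(user_agent, known_tokens=KNOWN_AI_TOKENS):
    """Return the first known AI token found in the user agent, or None.

    The header is self-declared and can be spoofed, so treat a match as
    a hint rather than proof of identity.
    """
    lowered = user_agent.lower()
    for token in known_tokens:
        if token.lower() in lowered:
            return token
    return None


def render_robots_txt(policy):
    """Render one robots.txt group per agent from the policy table."""
    groups = []
    for agent, decision in policy.items():
        rule = "Allow: /" if decision == "allow" else "Disallow: /"
        groups.append(f"User-agent: {agent}\n{rule}")
    return "\n\n".join(groups) + "\n"


# Check the rendered rules the way a compliant crawler would.
parser = RobotFileParser()
parser.parse(render_robots_txt(AI_POLICY).splitlines())
print(parser.can_fetch("GPTBot", "https://example.com/pricing"))
print(parser.can_fetch("ChatGPT-User", "https://example.com/pricing"))
```

Agents that ignore these directives, or that do not declare themselves at all, still need to be handled through the behavioral scoring and log review described in the steps above.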

Importantly, you should ensure you are compliant with any associated regulations and that your approach is transparent.

Why Does Bot and Agent Management Matter to Your Go-to-Market?

A successful go-to-market (GTM) strategy is crucial to entering new markets and expanding market share. Bot and agent management is vital, ensuring that technology works for your website and buyers – not against them.

AI crawlers and agents now make up a growing share of your web traffic. The goal is not only to block abuse, but to decide how much to trust each interaction and shape the experience accordingly. That means letting good automation in with the right controls, challenging risky sessions, and keeping your buyer’s journey and analytics reliable.

Let’s look at three key focus areas:

How Unmanaged Bot Traffic Inflates Ad Spend and Corrupts Campaign Data

When you invest in a paid media budget, you want to ensure your ads reach potential customers: you don’t want bots as your audience if they don’t represent a real person or buyer. This could lead to misleading metrics and wasted money, plus the prospect of increased harm in the future as you build tomorrow’s campaign spending on unreliable data.

How Bot Traffic Corrupts Web Analytics and Undermines Go-to-Market Decisions

You need effective, accurate visibility into the impact of bots and agents on your web traffic and campaigns. With effective analytics, you can gain the insights you need, ultimately leading to better decision-making.

How Bot-Submitted Form Traffic Degrades Lead Quality and CRM Integrity

Bots can drive fake form submissions, creating a harmful impact on your GTM by affecting sales and marketing efficiency. This isn’t to say that bots are always a negative thing in your forms, just that you want to know when they are working on behalf of a buyer – or not.

So how do you move forward? Let’s look at some quick steps:

- Adopt technology that monitors traffic and distinguishes legitimate users from malicious actors.

- Challenge risky sessions as they are detected.

- Fine-tune your approach over time, changing parameters as needed.

Final Thoughts

Bots are diverse and complex applications. Many are essential to our daily online lives, helping us find the data we need and supporting businesses in offering the best services to their customers.

On the other hand, the dangers are all too obvious. Malicious bots can steal information and data, distort pricing, underpin malware, and cause serious financial and reputational damage to individuals and businesses.

The key, then, is separating the good from the bad: you need to identify malicious bots before blocking them from your site, while also building your insights in the ‘grey’ zone by evaluating bots that may be suspicious. Your best option is a system that is built for growth and scale and that constantly evolves to accurately identify and manage all forms of human-, bot-, and AI-driven traffic, whether beneficial or harmful.

CHEQ provides end-to-end protection and enablement of the human-AI customer journey, with leading bot and AI detection and defense technology at the heart of more than 700 enterprises across the globe. Customers experience accuracy, explainability, and actionability that is unrivalled across the market:

- 6T signals processed daily

- 1M domains monitored

- 2K+ real-time cybersecurity challenges on each visit

- <0.009% false positive rate

Get in touch with CHEQ today to book a demo and learn how we can help protect your business from the dangers of malicious bots while enabling it to reap the benefits of welcoming legitimate AI-driven buyers.